Google BigQuery Integration

In this article, we’ll connect Google BigQuery to Y42 as an integration.

BigQuery is Google's serverless, petabyte-scale data warehouse for analytics. With BigQuery, you can run analytics over large amounts of data using standard SQL queries.

Overview

Authentication

To use a service account from outside of Google Cloud, such as on other platforms or on-premises, you must first establish the identity of the service account. Public/private key pairs provide a secure way of accomplishing this goal. When you create a service account key, the public portion is stored on Google Cloud, while the private portion is available only to you.

To get the key that connects Google BigQuery as an integration, you need a Google Cloud account and a service account. A service account is an identity that an instance or an application can use to make API requests on your behalf.

Make sure the service account has the required permissions:

- BigQuery Admin

- Storage Admin

- Storage Object Admin
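If you prefer to grant these roles from the command line rather than the console, the sketch below builds the matching `gcloud projects add-iam-policy-binding` commands. It is only an illustration: the project ID and service account email in the usage section are hypothetical placeholders, and the helper `grant_commands` is not part of Y42 or any Google library.

```python
import shlex
from typing import List

# Role display names from the list above, mapped to their Google Cloud IAM role IDs.
REQUIRED_ROLES = {
    "BigQuery Admin": "roles/bigquery.admin",
    "Storage Admin": "roles/storage.admin",
    "Storage Object Admin": "roles/storage.objectAdmin",
}


def grant_commands(project_id: str, service_account_email: str) -> List[str]:
    """Build one gcloud command per required role (hypothetical helper)."""
    member = f"serviceAccount:{service_account_email}"
    return [
        " ".join([
            "gcloud", "projects", "add-iam-policy-binding",
            shlex.quote(project_id),
            f"--member={shlex.quote(member)}",
            f"--role={shlex.quote(role)}",
        ])
        for role in REQUIRED_ROLES.values()
    ]


if __name__ == "__main__":
    # Placeholder values; replace them with your own project and service account.
    for cmd in grant_commands("my-project", "y42-loader@my-project.iam.gserviceaccount.com"):
        print(cmd)
```

The script only prints the commands, so you can review them before running anything against your project.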

Next, open the "Keys" section of the service account. Click "Add Key", create a new private key, and download it as a JSON file. Store this file securely, because it cannot be recovered if lost. You can then upload the JSON file to authenticate your BigQuery integration.
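Before uploading, it can help to confirm that the downloaded file really is a service account key. The following is a minimal sketch using only the Python standard library. It assumes the usual layout of a Google Cloud service account key file (fields such as `type`, `project_id`, `private_key`, and `client_email`); the function name `check_key_file` and the file name in the usage section are hypothetical, and Y42 may apply its own checks on upload.

```python
import json
from pathlib import Path

# Fields a Google Cloud service account key file normally contains.
EXPECTED_FIELDS = ("type", "project_id", "private_key_id", "private_key", "client_email")


def check_key_file(path):
    """Return a list of problems found in the key file; an empty list means none were found."""
    try:
        data = json.loads(Path(path).read_text(encoding="utf-8"))
    except (OSError, ValueError) as exc:
        # Covers a missing file, an unreadable file, and invalid JSON.
        return [f"cannot read key file: {exc}"]
    if not isinstance(data, dict):
        return ["key file is not a JSON object"]
    problems = [f"missing field: {name}" for name in EXPECTED_FIELDS if not data.get(name)]
    if data.get("type") and data["type"] != "service_account":
        problems.append(f"unexpected type: {data['type']}")
    return problems


if __name__ == "__main__":
    # Placeholder file name; point this at the key you downloaded.
    issues = check_key_file("service-account-key.json")
    print("Key file looks complete." if not issues else "\n".join(issues))
```

The check never prints the private key itself, so it is safe to run in a shared terminal.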

Import Settings

None.

Schema

This integration uses a dynamic schema, meaning the schema is loaded at run time.

Updating your data

For this source, only a full import is possible. You can schedule updates monthly, weekly, daily, or even hourly.

BigQuery Setup Guide:

Note: To connect BigQuery with Y42, you need a Google BigQuery account.

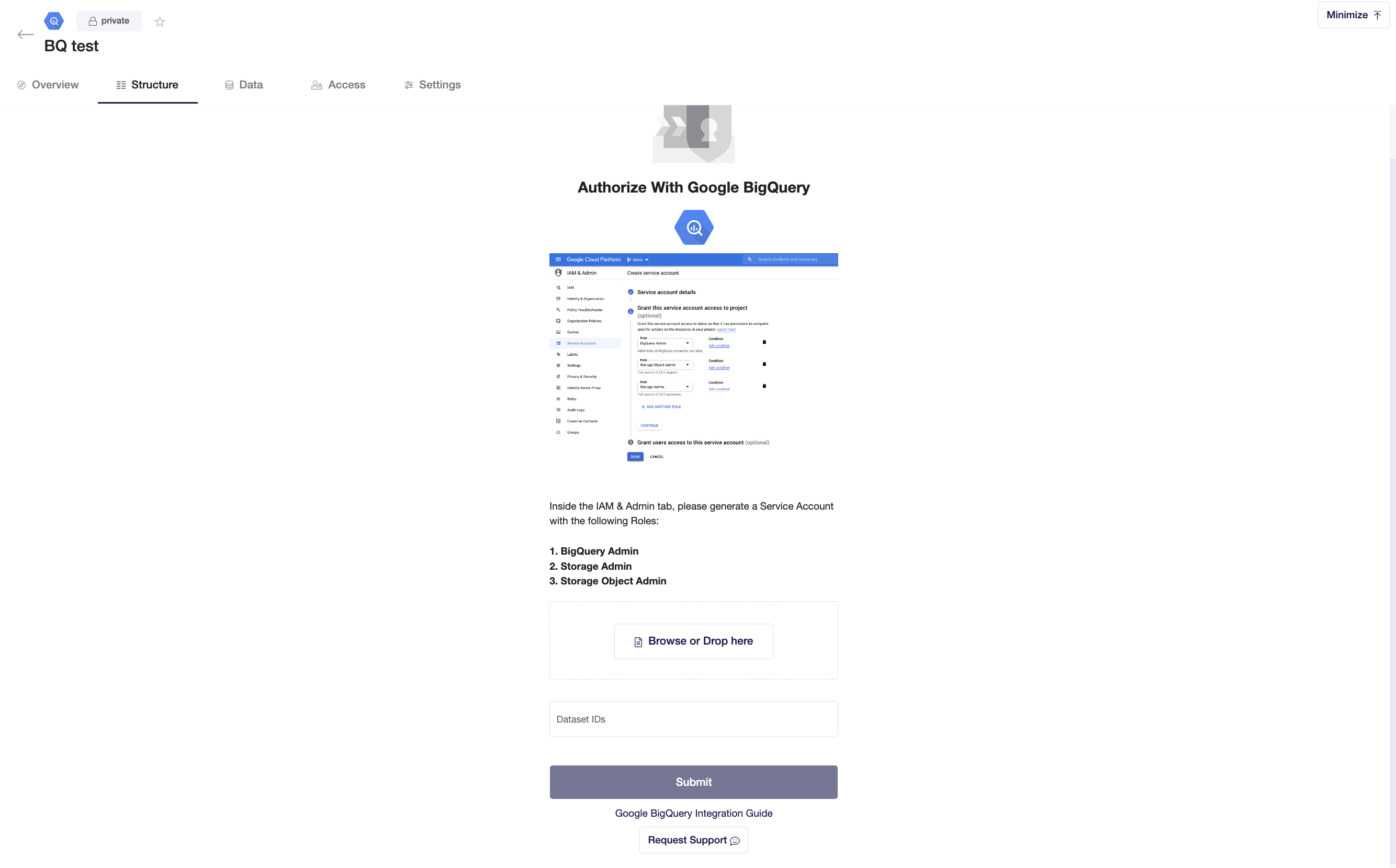

- In the Google Cloud console for your BigQuery project, inside the IAM & Admin tab, create a service account with the following roles:

a) BigQuery Admin

b) Storage Admin

c) Storage Object Admin

(For more information, follow the links here and here.)

- Go to Integrate in Y42, click "Add...", then search for Google BigQuery and select it.

- Add a name for your integration.

- Insert the JSON key file generated for your service account.

- You can specify which dataset you want to include in your integration. Datasets in BigQuery are top-level containers that are used to organise and control access to your tables and views.

- Click Submit.

- Select the tables you need and click Import. You can start accessing the tables once the status is “Ready”.

Note: You can always import and reimport other tables as well, or delete them.